Classification Performance

How do I evaluate the performance of my Classification pipeline?

Once you’ve built a Classification pipeline, Cortex makes it easy to explore results and learn more about your pipeline’s predictive power. In this guide, we’ll show you how to evaluate the performance of a Classification pipeline in Cortex.

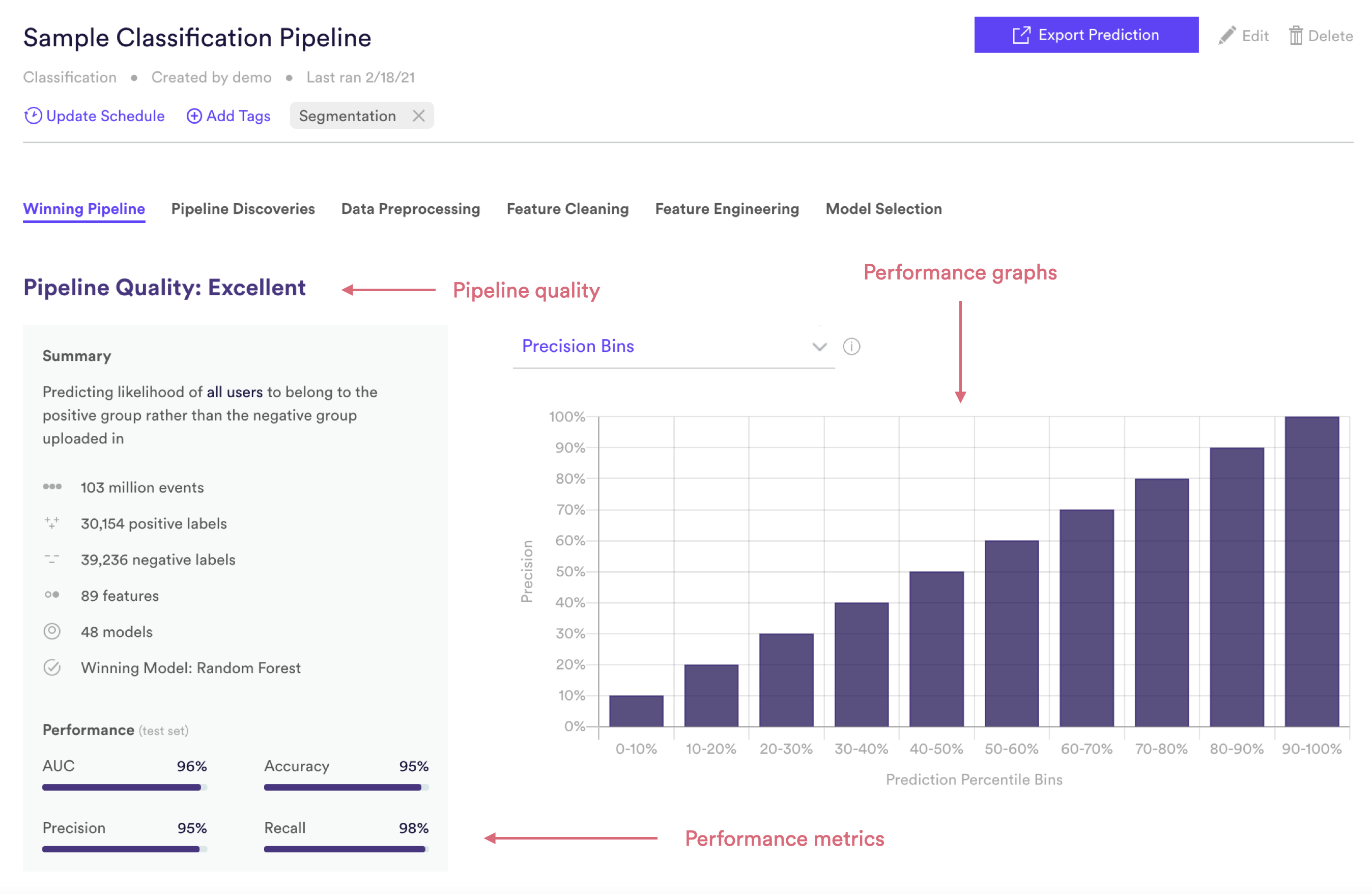

Cortex summarizes performance of any pipeline in three ways: Pipeline Quality, Performance Metrics, and Performance Graphs. The following sections describe how to interpret each of these specifically for a Classification pipeline.

Classification Pipeline Quality

Pipeline Quality gives you a quick sense of your pipeline’s performance without having to scrutinize any technical metrics. A pipeline’s Quality is meant to serve as a rough guide to how well its predictions match reality, but what constitutes good performance ultimately depends on the difficulty of the problem. Sometimes “Average” actually represents the best possible performance that can be achieved with the data at hand.

For Classification, Pipeline Quality is determined based on AUC, a common measure of performance for binary classification models. AUC is described in more detail in the Metrics section below.

| Pipeline Quality | AUC |
| --- | --- |
| Excellent | >85% |
| Very Good | 75-85% |
| Good | 65-75% |
| Average | 55-65% |
| Below Average | <55% |
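As a rough illustration, the quality bands above can be written as a simple lookup. This is a minimal sketch, not Cortex's implementation: the function name `pipeline_quality` is hypothetical, and because the table does not say which band a boundary value such as exactly 75% belongs to, the sketch assumes it falls into the higher band wherever the table allows.

```python
def pipeline_quality(auc_percent: float) -> str:
    """Map an AUC percentage (0-100) to a Pipeline Quality label."""
    if not 0.0 <= auc_percent <= 100.0:
        raise ValueError("AUC must be a percentage between 0 and 100")
    # Assumption: a boundary value belongs to the higher band unless the
    # table rules it out (">85%" is strict, so exactly 85 is "Very Good").
    if auc_percent > 85:
        return "Excellent"
    if auc_percent >= 75:
        return "Very Good"
    if auc_percent >= 65:
        return "Good"
    if auc_percent >= 55:
        return "Average"
    return "Below Average"


print(pipeline_quality(78.4))  # falls in the 75-85% band
```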

Classification Performance Metrics

Cortex publishes four well-known performance metrics for each Classification pipeline. Each of these metrics is computed on a test set, that is, a random subset of your uploaded labels that Cortex withheld from training. This allows us to measure performance on data your pipeline has never seen before.

To frame these metrics in real terms, consider a Classification pipeline which predicts each user’s likelihood of being a homeowner (positive group), as opposed to a non-homeowner (negative group). Note however that your Cortex account can be configured to make predictions about any type of object tied to your event data (e.g. commerce items, media content, home listings, etc.).

AUC

AUC (or AUROC, short for Area Under the Receiver Operating Characteristic curve) is one of the most commonly used measures of classification performance. It is represented as a percentage from 0-100%. The higher your pipeline’s AUC, the better its predictive power.

AUC is derived from the ROC curve (read here for more details), but can be interpreted in a more intuitive way: if a positive and negative label are both drawn at random, what is the probability that the positive label was given a higher prediction than the negative label? In our example above, a positive label is a user who is a known homeowner, while a negative label is a user who is known not to be a homeowner.
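To make the pairwise interpretation concrete, here is a minimal sketch that computes AUC by comparing every positive label with every negative label. The function name and the toy scores are illustrative assumptions, and counting a tie as half a "win" is a common convention rather than something this guide specifies. Cortex may compute AUC differently (for example, directly from the ROC curve).

```python
from itertools import product


def pairwise_auc(positive_scores, negative_scores):
    """Share of (positive, negative) pairs where the positive scores higher."""
    wins = 0.0
    for pos, neg in product(positive_scores, negative_scores):
        if pos > neg:
            wins += 1.0
        elif pos == neg:
            wins += 0.5  # assumption: ties count as half a win
    return wins / (len(positive_scores) * len(negative_scores))


# Hypothetical model scores for known homeowners and known non-homeowners.
homeowner_scores = [0.9, 0.8, 0.4]
non_homeowner_scores = [0.7, 0.3, 0.2]

# 8 of the 9 pairs rank the homeowner higher, so AUC is 8/9 (about 89%).
auc = pairwise_auc(homeowner_scores, non_homeowner_scores)
print(f"AUC: {auc:.1%}")
```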

Precision

Of all the users that your pipeline predicted to be in the positive class, what percentage were actually in the positive class? In terms of our example, of all the users predicted to be a homeowner, what percent actually are? Read here for more details.

Recall

Of all the users that were actually in the positive class, what percentage did your pipeline predict to be in the positive class? In terms of our example, of all the users who are known homeowners, what percent were predicted as such? Read here for more details.

Accuracy

What percent of all users did your pipeline classify correctly across both the positive and negative classes? In terms of our example, what percent of all predictions were correct (for both users who were and were not predicted to be homeowners)? Read here for more details.
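The three definitions above can be sketched in a few lines. This is an illustrative example only: the function name, the dictionary keys, and the toy labels are assumptions, with `1` standing for homeowner and `0` for non-homeowner.

```python
def classification_metrics(actual, predicted):
    """Compute precision, recall, and accuracy from 0/1 labels."""
    pairs = list(zip(actual, predicted))
    tp = sum(1 for a, p in pairs if a and p)          # predicted positive, is positive
    fp = sum(1 for a, p in pairs if not a and p)      # predicted positive, is negative
    fn = sum(1 for a, p in pairs if a and not p)      # predicted negative, is positive
    tn = sum(1 for a, p in pairs if not a and not p)  # predicted negative, is negative
    return {
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
        "accuracy": (tp + tn) / len(pairs),
    }


# Hypothetical test set: 4 known homeowners, 6 known non-homeowners.
actual_homeowner = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
predicted_homeowner = [1, 1, 1, 0, 1, 1, 0, 0, 0, 0]

metrics = classification_metrics(actual_homeowner, predicted_homeowner)
# precision = 3/5, recall = 3/4, accuracy = 7/10
print(metrics)
```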

Note: Precision, recall, and accuracy are reported at the model score threshold that maximizes the F1 score (the harmonic mean of precision and recall) along each Classification pipeline’s precision-recall curve (described in the Performance Graphs section below).
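One way to picture how a maximum-F1 operating point could be found is to try every distinct model score as a threshold and keep the one with the highest F1. The sketch below is an assumption about the general technique, not a description of Cortex internals: the function name is hypothetical, and it treats a score equal to the threshold as a positive prediction.

```python
def max_f1_threshold(scores, labels):
    """Return (threshold, f1) for the candidate threshold with the highest F1."""
    best_threshold, best_f1 = None, -1.0
    for threshold in sorted(set(scores), reverse=True):
        # Assumption: scores at or above the threshold count as positive.
        tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y)
        fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and not y)
        fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y)
        f1 = 2 * tp / (2 * tp + fp + fn)  # equivalent to 2PR / (P + R)
        if f1 > best_f1:
            best_threshold, best_f1 = threshold, f1
    return best_threshold, best_f1


# Hypothetical test-set scores with their true labels (1 = homeowner).
test_scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.2]
test_labels = [1, 1, 0, 1, 0, 0]

best_threshold, best_f1 = max_f1_threshold(test_scores, test_labels)
print(best_threshold, round(best_f1, 3))
```

In this toy data the best threshold is 0.4: it captures all three homeowners at the cost of one false positive, which gives an F1 of 6/7.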

Classification Performance Graphs

Like the performance metrics described above, performance graphs are generated based on data from a test set — that is, a random subset of your uploaded labels that Cortex withheld from training. This allows us to measure performance on data your pipeline has never seen before.

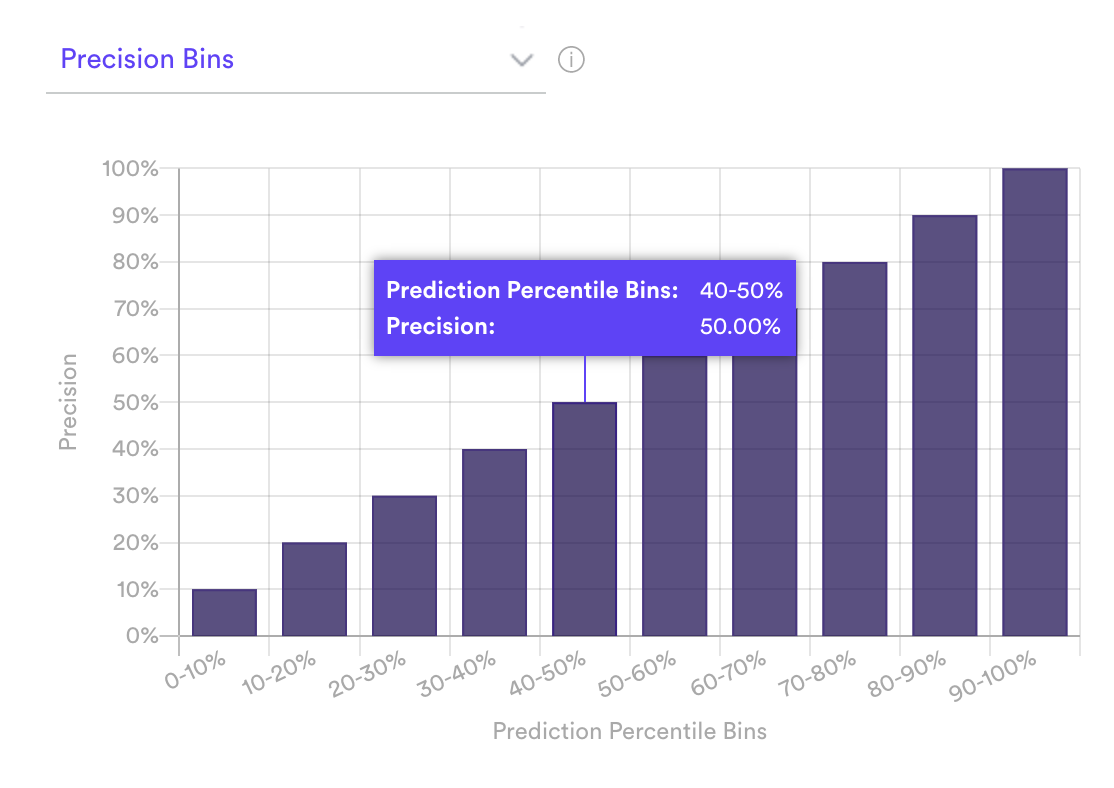

Precision Bins

The Precision Bins graph visualizes how well your pipeline is able to rank users based on their likelihood of belonging to the positive group. To do this, Cortex first sorts all the test set users in order of predicted Classification score, and divides them into 10 equally-sized bins. The first bin contains the 10% of users with the lowest predictions, and the tenth bin contains the 10% with the highest predictions. Cortex then observes what percent of users in each bin actually belong to the positive group (i.e. precision), and visualizes these results in the Precision Bins graph.

If your pipeline’s predictions are accurate, precision will increase steadily and sharply from bin 1 to bin 10. In terms of our example pipeline, this pattern would indicate that the users Cortex identified as likely homeowners actually appear in the uploaded group of known homeowners more often than users identified as unlikely to be homeowners.
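The binning procedure described above can be sketched as follows. This is a simplified illustration under stated assumptions: the function name and toy data are hypothetical, and when the number of users is not divisible by 10 the sketch splits them at integer boundaries, which may differ from how Cortex handles uneven bins.

```python
def precision_bins(scores, labels, num_bins=10):
    """Return the share of positives in each score-ordered bin (lowest first)."""
    if len(scores) != len(labels):
        raise ValueError("scores and labels must have the same length")
    n = len(scores)
    if n < num_bins:
        raise ValueError("need at least one user per bin")
    # Sort users from lowest to highest predicted score.
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0])
    rates = []
    for i in range(num_bins):
        start = i * n // num_bins
        end = (i + 1) * n // num_bins
        bin_labels = [y for _, y in ranked[start:end]]
        rates.append(sum(bin_labels) / len(bin_labels))
    return rates


# Hypothetical test set of 20 users: scores 0.05, 0.10, ..., 1.00.
bin_scores = [(i + 1) / 20 for i in range(20)]
bin_labels = [0, 0, 0, 0, 0, 0, 0, 1, 0, 1, 0, 1, 1, 0, 1, 1, 1, 1, 1, 1]

bin_rates = precision_bins(bin_scores, bin_labels)
print(bin_rates)  # rises from 0.0 in bin 1 to 1.0 in bin 10
```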

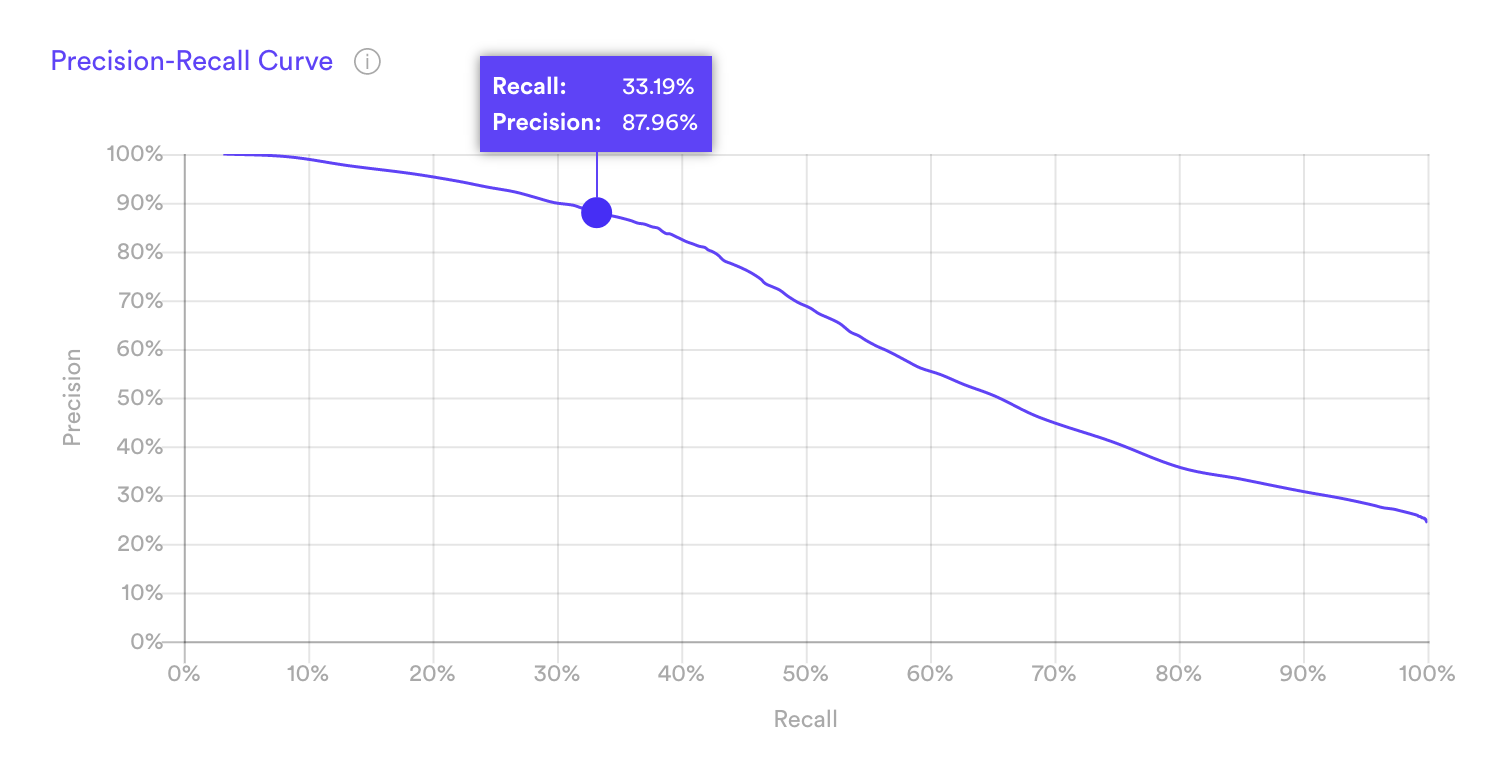

Precision-Recall Curve

The precision-recall curve shows the tradeoff between precision and recall as the threshold for a positive class prediction changes. The more accurate your pipeline, the larger the area beneath this curve.

For a given pipeline, precision and recall are often inversely related — improving one usually means sacrificing the other. To understand why, note first that Cortex’s Classification predictions take the form of a “model score” between 0 and 1.

| user_id | model_score |
| --- | --- |
| ABC | 0.941 |
| DEF | 0.897 |
| … | … |
| UVW | 0.0212 |
| XYZ | 0.0192 |

In reference to the above table:

- This sample table is sorted in descending order of users’ model scores.

- User ABC is predicted as most similar to users in the positive class.

- User XYZ is predicted as least similar to users in the positive class.

Model scores are useful in that they tell you something about the confidence of each prediction. But precision and recall measure whether a pipeline’s predictions are right or wrong, so in order to compute these metrics we must first set a model score threshold. Any user scored above this threshold is considered a positive class prediction (e.g. predicted to be a homeowner), and any user below this threshold is considered a negative class prediction (e.g. predicted to not be a homeowner).

Raising this threshold is likely to increase our pipeline’s precision but lower its recall. Imagine setting a high threshold such that only a few high-confidence users are considered positive predictions (say, those with model scores above 0.95). Our pipeline would get a lot of these predictions “right” (i.e. have high precision), but would miss out on many other actual positives (i.e. have low recall). If we set a low threshold so that most predictions are considered positive (say, those with model score above 0.1), many of these predictions will be incorrect (low precision), but we’d correctly capture almost all the actual positives (high recall).

The Precision-Recall Curve measures this tradeoff as we vary the model score threshold from high (left side of the curve) to low (right side).
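To see the tradeoff numerically, the sketch below computes one (precision, recall) point per candidate threshold, moving from the highest threshold to the lowest. The function name and toy data are illustrative assumptions, and the sketch counts a score equal to the threshold as a positive prediction. It is not meant to reproduce the exact points Cortex plots.

```python
def precision_recall_points(scores, labels):
    """Return (threshold, precision, recall) tuples, highest threshold first."""
    total_positives = sum(1 for y in labels if y)
    if total_positives == 0:
        raise ValueError("recall is undefined without any positive labels")
    points = []
    for threshold in sorted(set(scores), reverse=True):
        # Assumption: scores at or above the threshold count as positive.
        predicted_positive = [y for s, y in zip(scores, labels) if s >= threshold]
        tp = sum(1 for y in predicted_positive if y)
        precision = tp / len(predicted_positive)
        recall = tp / total_positives
        points.append((threshold, precision, recall))
    return points


# Hypothetical test-set scores with their true labels (1 = homeowner).
curve_scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.2]
curve_labels = [1, 1, 0, 1, 0, 0]

curve_points = precision_recall_points(curve_scores, curve_labels)
for threshold, precision, recall in curve_points:
    print(f"threshold={threshold:.1f} precision={precision:.2f} recall={recall:.2f}")
```

In this toy data, the highest threshold gives perfect precision but captures only one of the three homeowners, while the lowest threshold captures all of them at 50% precision.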

Related Links

- Building a Classification Pipeline

- Look Alike Pipeline Performance

- Regression Pipeline Performance

- Future Events Pipeline Performance

- Uplift Pipeline Performance

- Recommendations Pipeline Performance

Still have questions? Reach out to support@mparticle.com for more info!