What is a Machine Learning Pipeline?

More and more businesses are collecting data with an eye toward Machine Learning (ML). But while most ML algorithms can only interpret tidy datasets, real-world data is usually messy and unstructured. Cortex bridges this gap through a multi-step framework that automatically organizes and cleans raw data, transforms it into a machine-readable form, trains a model, and generates predictions, all on a continuous basis. Collectively, we refer to these steps as a Machine Learning Pipeline.

In this guide, we describe the benefits of a Machine Learning Pipeline and explain why each step is necessary for your business to deploy a scalable ML solution.

Benefits of a Machine Learning Pipeline

Cortex’s end-to-end Machine Learning Pipelines enable several key advantages for our partners:

(1) Make continuous predictions

Unlike a one-time model, an automated Machine Learning Pipeline can process continuous streams of raw data collected over time. This allows you to take ML out of the lab and into production, creating a system that keeps learning from fresh data and generates up-to-date predictions for real-time optimization at scale.

(2) Get up and running right away

Building ML internally typically takes longer and costs more than anticipated. Worse, according to Gartner, more than 80% of ML projects ultimately fail. Even if a business defies these odds, it often has to start from scratch for its next ML project. By automating every step of the Machine Learning Pipeline, Cortex allows teams to get started faster and more cheaply than the alternatives. What's more, Cortex provides a foundation for iterating on and expanding your ML goals: once data is flowing into your account, you can build a new Machine Learning Pipeline in a matter of minutes.

(3) Accessible to every team

By automating the hardest parts and wrapping the rest in a simple interface, Cortex makes ML accessible to teams of varying technical abilities. This means you can put ML in the hands of the business stakeholders who’ll actually use the predictions, and free up your data science team to focus on bespoke modeling.

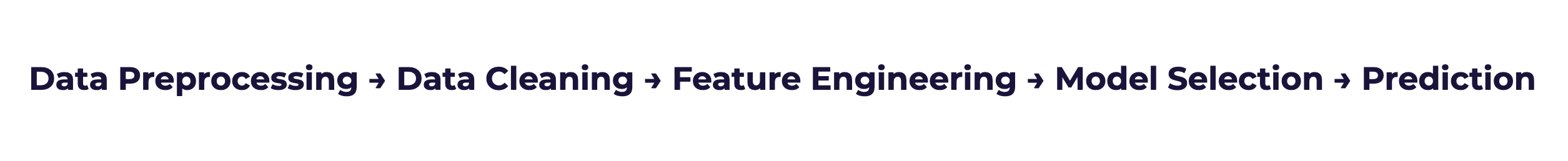

Machine Learning Pipeline Steps

Each Cortex Machine Learning Pipeline encompasses five distinct steps. To frame these steps in real terms, consider a Future Events Pipeline that predicts each user's probability of purchasing within 14 days. Note, however, that your Cortex account can be configured to make predictions about any type of object tied to your event data (e.g. commerce items, media content, home listings, etc.).

Step 1: Data Preprocessing

The first step in any pipeline is data preprocessing. In this step, raw data is gathered and merged into a single organized framework. Cortex comes equipped with various connectors for ingesting raw data, creating a funnel which loads information into Cortex from across your business.

Cortex can ingest multiple datasets from multiple data sources. In other words, you can send User Events separately from User Attributes. And even within the User Events dataset, you can send Mobile events in a separate feed from Web events. Regardless of its type or source, Cortex will automatically merge all your data into a single unified view.

For instance, to build our example pipeline, we may want to incorporate a combination of user event data (e.g. transactions), user attribute data (e.g. demographics), and inventory attribute data (e.g. item categories). In the preprocessing step, Cortex will continuously load data from each of these three sources and merge them to get a comprehensive view of each user's behavior.
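To make the idea of a "single unified view" concrete, here is a minimal sketch in plain Python of merging separate event feeds (e.g. Web and Mobile) with user and item attributes. It is not how Cortex implements preprocessing: the feed contents, the field names (`user_id`, `item_id`, `ts`, `age_band`, `category`), and the `unify` function are all hypothetical and exist only for illustration.

```python
from collections import defaultdict

# Hypothetical raw feeds; field names are illustrative, not Cortex's schema.
web_events = [
    {"user_id": "u1", "event": "purchase", "item_id": "i1", "ts": 100},
]
mobile_events = [
    {"user_id": "u1", "event": "click", "item_id": "i2", "ts": 90},
    {"user_id": "u2", "event": "click", "item_id": "i1", "ts": 95},
]
user_attributes = {"u1": {"age_band": "25-34"}, "u2": {"age_band": "35-44"}}
item_attributes = {"i1": {"category": "shoes"}, "i2": {"category": "hats"}}


def unify(event_feeds, users, items):
    """Merge several event feeds with user and item attributes into one view per user."""
    view = defaultdict(lambda: {"attributes": {}, "events": []})
    for feed in event_feeds:
        for event in feed:
            enriched = dict(event)
            # Join item attributes onto each event; unknown items simply get no extra fields.
            enriched.update(items.get(event["item_id"], {}))
            view[event["user_id"]]["events"].append(enriched)
    for user_id, attrs in users.items():
        view[user_id]["attributes"].update(attrs)
    # Order each user's events chronologically, regardless of which feed they came from.
    for record in view.values():
        record["events"].sort(key=lambda ev: ev["ts"])
    return dict(view)


unified = unify([web_events, mobile_events], user_attributes, item_attributes)
```

After the merge, user `u1` has one chronologically ordered event history (a Mobile click followed by a Web purchase), each event carries its item category, and the user's demographic attributes sit alongside it.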

Read this article for more information about Cortex’s data preprocessing step.

Step 2: Data Cleaning

Next, this data flows to the cleaning step. To make sure the data paints a consistent picture that your pipeline can learn from, Cortex automatically detects and scrubs away outliers, missing values, duplicates, and other errors.

In terms of our example pipeline, Cortex's data cleaning module could automatically find and remove duplicate transactions that might otherwise lead to less accurate predictions.
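As a rough illustration of two of the cleaning operations described above, the sketch below drops records with missing required fields and removes duplicate transactions. It assumes, hypothetically, that each transaction carries a unique `transaction_id`; the `clean_transactions` function and its fields are illustrative and do not reflect Cortex's actual cleaning rules, which also handle outliers and other errors.

```python
def clean_transactions(transactions, required=("transaction_id", "user_id", "amount")):
    """Drop records with missing required fields, then keep the first copy of each transaction_id."""
    seen = set()
    cleaned = []
    for t in transactions:
        # Missing values: skip records where any required field is absent or None.
        if any(t.get(field) is None for field in required):
            continue
        # Duplicates: the same transaction_id delivered more than once (e.g. a retried upload).
        if t["transaction_id"] in seen:
            continue
        seen.add(t["transaction_id"])
        cleaned.append(t)
    return cleaned


raw = [
    {"transaction_id": "t1", "user_id": "u1", "amount": 20.0},
    {"transaction_id": "t1", "user_id": "u1", "amount": 20.0},  # duplicate
    {"transaction_id": "t2", "user_id": "u2", "amount": None},  # missing amount
    {"transaction_id": "t3", "user_id": "u2", "amount": 35.5},
]
cleaned = clean_transactions(raw)
```

Only `t1` (once) and `t3` survive, so user `u1` is no longer credited with a second, phantom purchase.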

Read this article for more information about Cortex’s data cleaning step.

Step 3: Feature Engineering

Feature Engineering is the process of transforming raw data into features that your pipeline will use to learn. A feature is simply a way to describe something quantifiable about your objects (e.g. users).

In terms of our example pipeline, imagine that Cortex has processed and cleaned a stream of user click events over time. In the feature engineering step, Cortex might transform this raw data into a feature that describes each user's total number of clicks over the past 7 days. Similar transformations are applied across all your events and attributes to engineer hundreds of predictive features for your pipeline.

Feature engineering is typically the most challenging and critical step in the Machine Learning Pipeline: your pipeline must not only choose which features to generate from a virtually unlimited pool of candidates, it must also crunch through huge amounts of data to build them.

Read this article for more information about Cortex’s feature engineering step.

Step 4: Model Selection

Next, Cortex automatically trains, tests, and validates hundreds of ML models using the features built in Step 3. Each model is given a set of labeled examples and tasked with learning a general relationship between your features and your goal. The models are then evaluated on a fresh set of data that wasn't used during training, and the best performer is selected as the winning model.

For example, Cortex would train our example pipeline using examples of users who did and did not purchase over a 14-day period in the past. The models then learn which feature patterns tend to be associated with purchasers versus non-purchasers. Once trained, each model's predictions are tested on a holdout set of examples to see which one predicts most accurately.
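The train-then-evaluate-on-holdout loop can be sketched in a few lines. To keep the selection logic visible, this example swaps in deliberately trivial candidate models (one-feature threshold rules) in place of the real ML model families Cortex trains; the feature names, the data, and the functions `fit_threshold`, `accuracy`, and `select_model` are all hypothetical.

```python
def accuracy(rows, feature, t):
    """Fraction of rows where 'feature >= t' matches the purchased label."""
    correct = sum((row[feature] >= t) == row["purchased"] for row in rows)
    return correct / len(rows)


def fit_threshold(train, feature):
    """'Train' a one-feature model: pick the threshold t with the best training accuracy."""
    best_t, best_acc = None, -1.0
    for t in sorted({row[feature] for row in train}):
        acc = accuracy(train, feature, t)
        if acc > best_acc:
            best_t, best_acc = t, acc
    return best_t


def select_model(train, holdout, candidate_features):
    """Fit every candidate on train, score it on the unseen holdout, return the best."""
    results = {}
    for feature in candidate_features:
        t = fit_threshold(train, feature)
        results[feature] = (t, accuracy(holdout, feature, t))
    winner = max(results, key=lambda f: results[f][1])
    return winner, results


# Hypothetical labeled examples: did the user purchase within the 14-day window?
train = [
    {"clicks_7d": 0, "sessions_30d": 5, "purchased": False},
    {"clicks_7d": 1, "sessions_30d": 1, "purchased": False},
    {"clicks_7d": 4, "sessions_30d": 2, "purchased": True},
    {"clicks_7d": 6, "sessions_30d": 6, "purchased": True},
]
holdout = [
    {"clicks_7d": 5, "sessions_30d": 1, "purchased": True},
    {"clicks_7d": 0, "sessions_30d": 4, "purchased": False},
]
winner, results = select_model(train, holdout, ["clicks_7d", "sessions_30d"])
```

Both candidates fit the training data reasonably well, but only the `clicks_7d` model generalizes to the holdout set, which is exactly why selection is based on held-out performance rather than training accuracy.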

Read this article for more information about Cortex’s model selection step.

Step 5: Prediction Generation

Once the winning model has been selected, it is used to make predictions across all your objects (e.g. users). Your predictions will take different forms depending on which type of pipeline you've built:

- A Future Events pipeline outputs a conversion probability for each user

- A Regression pipeline outputs a continuous value for each user

- Look Alike and Classification pipelines output a score between 0 and 1 that represents how similar each user is to your positive labels

- A Recommendations pipeline outputs a ranked list of items for each user, along with a score for each item indicating Cortex's certainty that the user will interact with that item next. For each user, the scores across all items sum to 1.
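For the Recommendations output, one simple way to obtain per-user scores that sum to 1 is to divide each item's raw score by the user's total and sort descending. This article does not say how Cortex normalizes its scores, so treat the `rank_recommendations` function and the item names below as a hypothetical sketch of the output shape, not Cortex's method.

```python
def rank_recommendations(raw_scores):
    """Turn one user's non-negative raw item scores into a ranked list whose scores sum to 1."""
    total = sum(raw_scores.values())
    if total <= 0:
        raise ValueError("need at least one positive score")
    normalized = {item: score / total for item, score in raw_scores.items()}
    # Highest-scoring item first; ties keep their original order (sorted() is stable).
    return sorted(normalized.items(), key=lambda pair: pair[1], reverse=True)


ranked = rank_recommendations({"shoes": 2.0, "hats": 1.0, "socks": 1.0})
```

Here `ranked` lists `shoes` first with a score of 0.5, followed by `hats` and `socks` at 0.25 each.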

In terms of our example pipeline, Cortex would (1) compute the most current values for the features chosen in Step 3, and (2) run these features through the winning model selected in Step 4. The result would be a prediction indicating each user’s probability of purchasing in the next 14 days.
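The two-part prediction step can be sketched as follows, assuming, purely for illustration, that the winning model is a logistic regression. The weights, bias, feature names, and the functions `purchase_probability` and `predict_all` are made up for this example; the actual winning model and its parameters come out of Step 4.

```python
import math

# Hypothetical winning model: a logistic regression with illustrative, made-up weights.
WEIGHTS = {"clicks_7d": 0.4, "sessions_30d": 0.1}
BIAS = -2.0


def purchase_probability(features):
    """Score one user's current feature values with the (hypothetical) winning model."""
    z = BIAS + sum(WEIGHTS[name] * features.get(name, 0.0) for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))  # logistic function maps z to a probability in (0, 1)


def predict_all(current_features):
    """Part (2): run every user's freshly computed features through the model."""
    return {user: purchase_probability(f) for user, f in current_features.items()}


# Part (1) would recompute these values from the latest data; they are hard-coded here.
current_features = {
    "u1": {"clicks_7d": 5, "sessions_30d": 10},
    "u2": {"clicks_7d": 0, "sessions_30d": 0},
}
predictions = predict_all(current_features)
```

The more engaged user `u1` receives a purchase probability of roughly 0.73, while `u2` receives roughly 0.12. Rerunning both parts on a schedule is what keeps these probabilities up to date.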

Read this article for more information about Cortex’s prediction step.

Still have questions? Reach out to support@mparticle.com for more info!